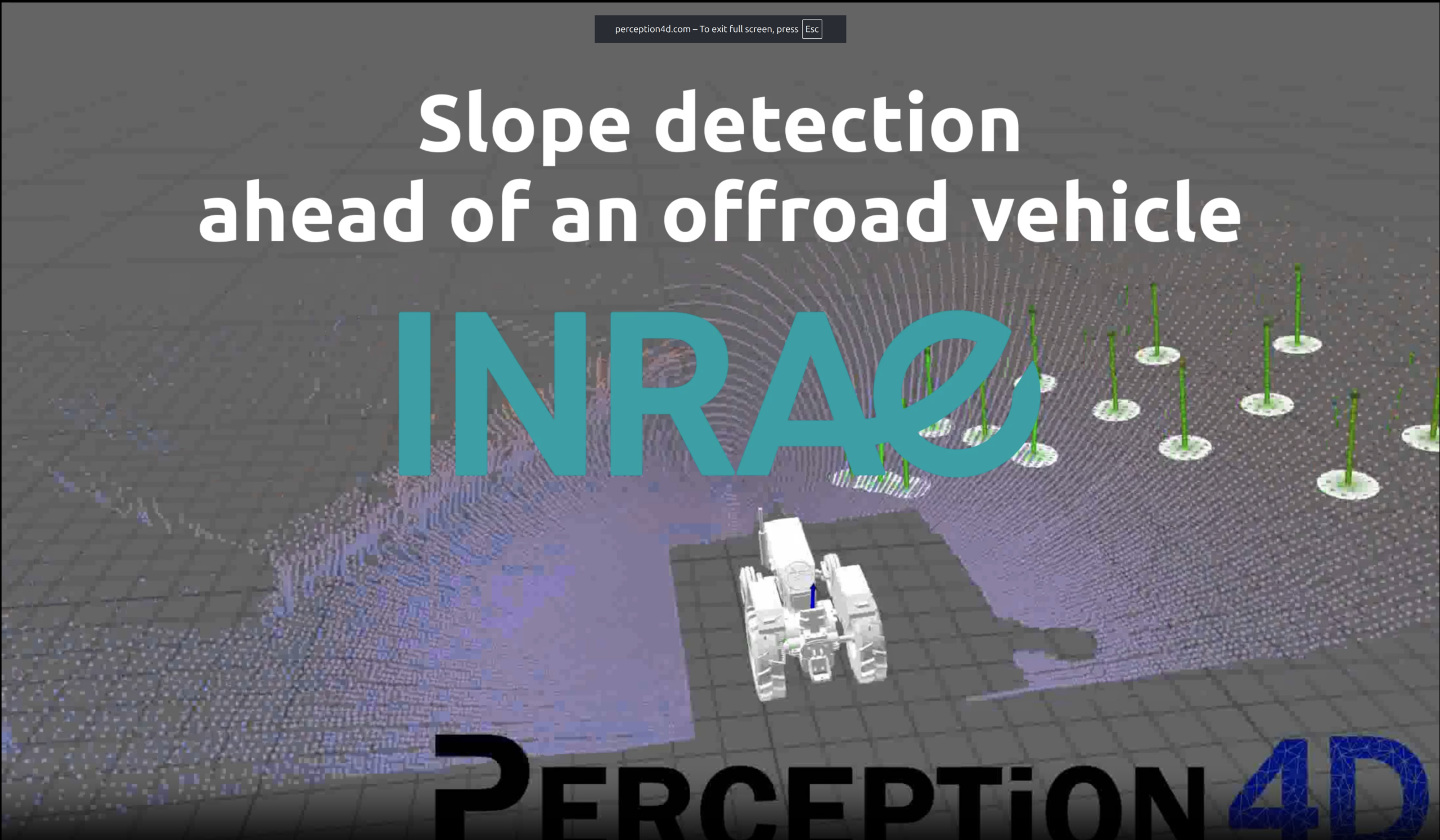

In one of our latest projects, we were thrilled to work with INRAE Clermont-Auvergne-Rhone-Alpes on a critical initiative: detecting dangerous slope angles in real time along a tractor’s future trajectory using onboard LiDAR. The goal? Prevent rollovers on steep terrain by giving the tractor’s control system early warnings about hazardous inclines.

Why it’s important: Tractor rollovers are a major safety risk in agriculture. By anticipating slope changes ahead of the vehicle, we can help operators and automated systems avoid dangerous situations, improving both safety and operational efficiency.

How we made it happen:

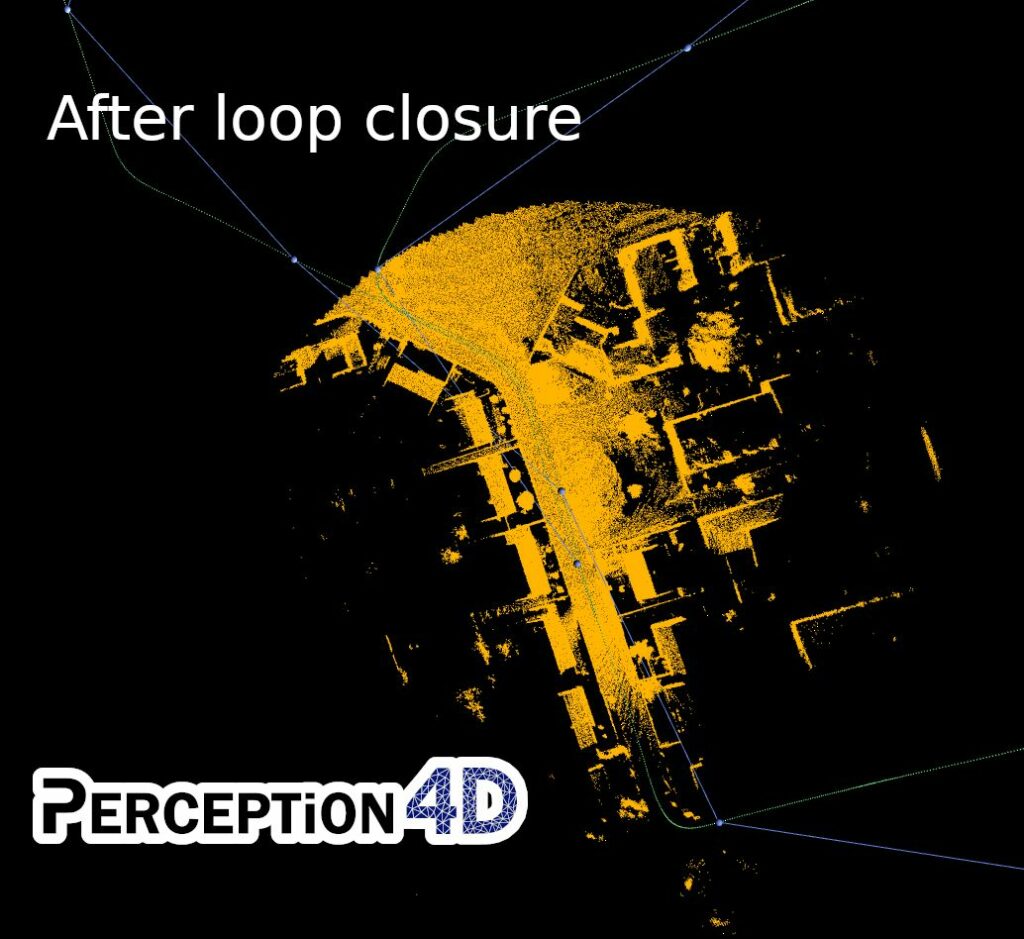

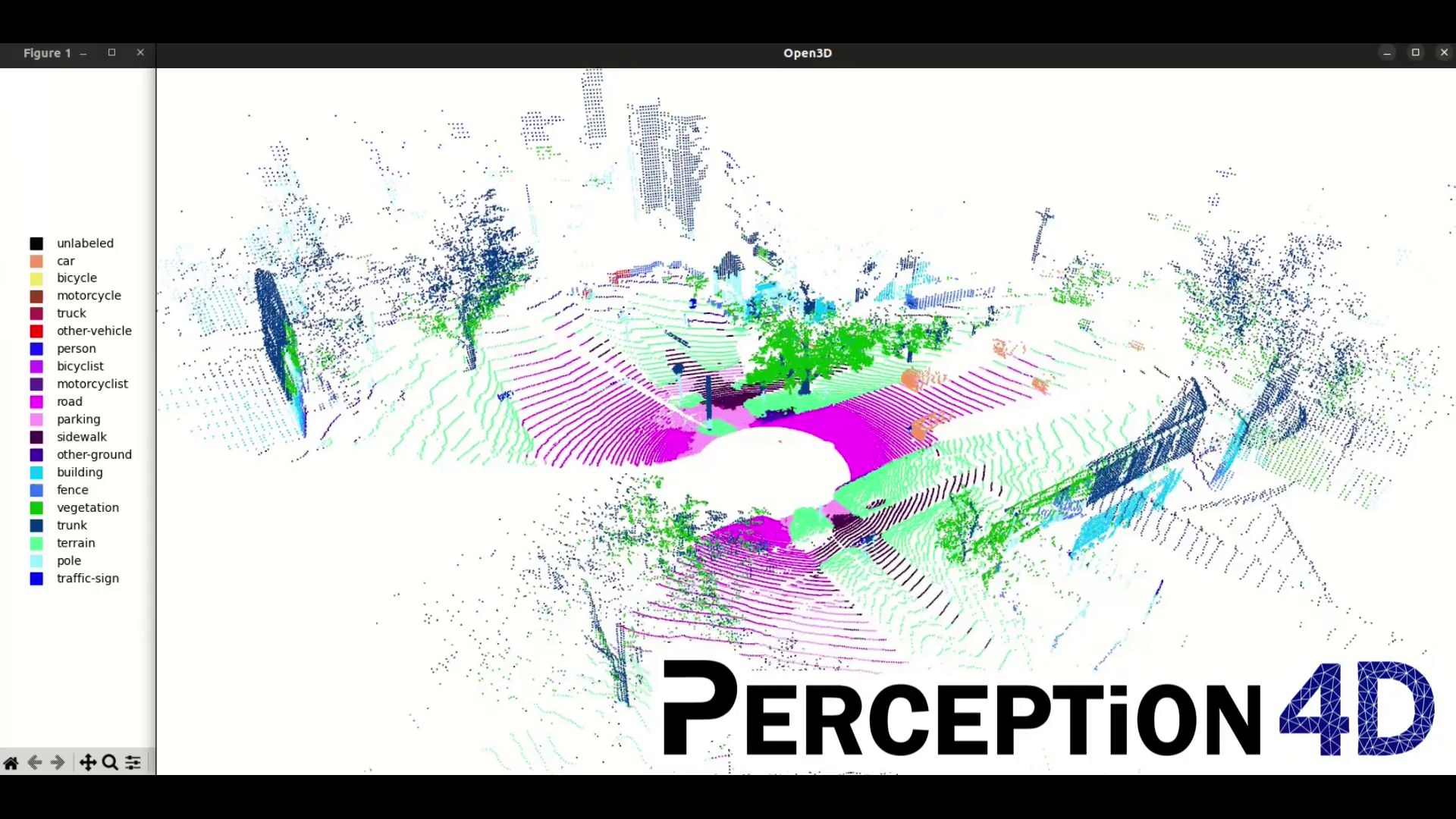

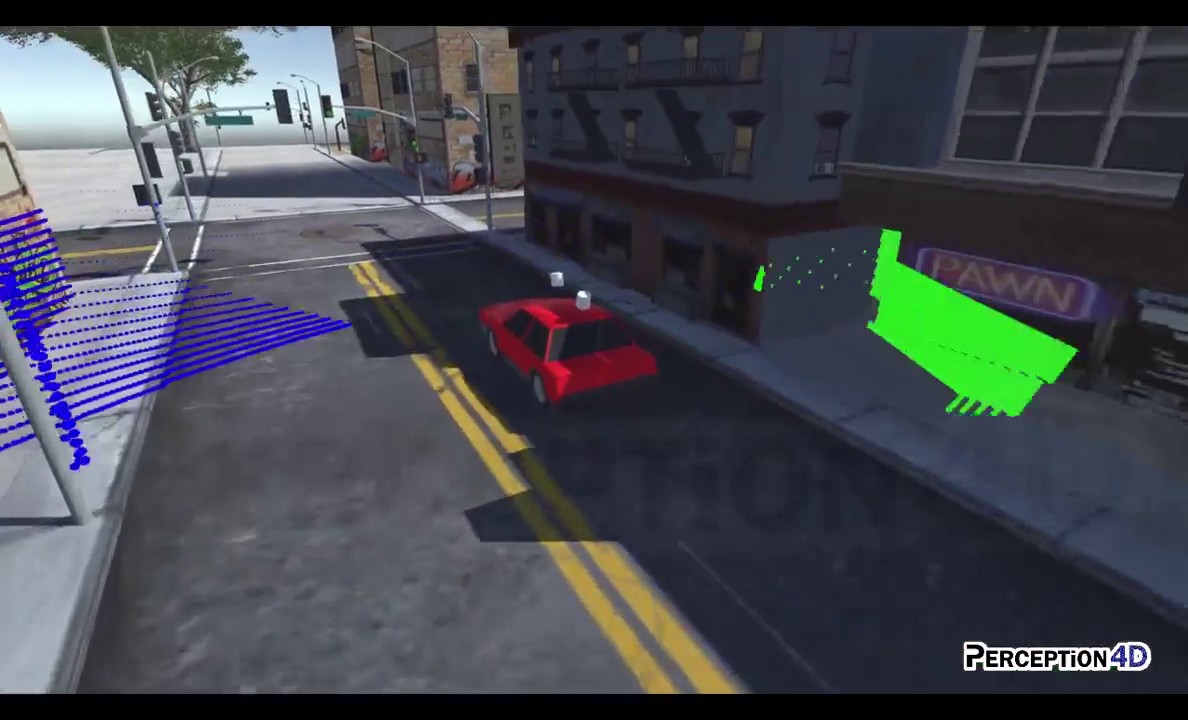

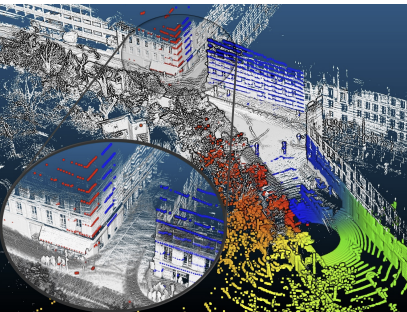

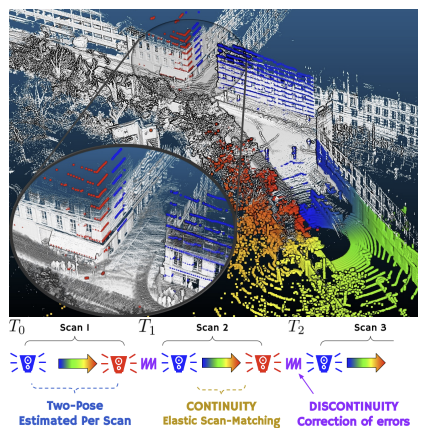

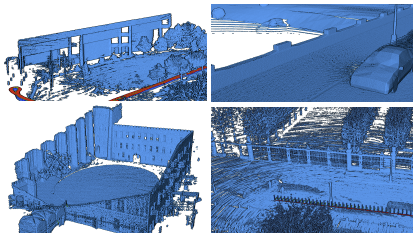

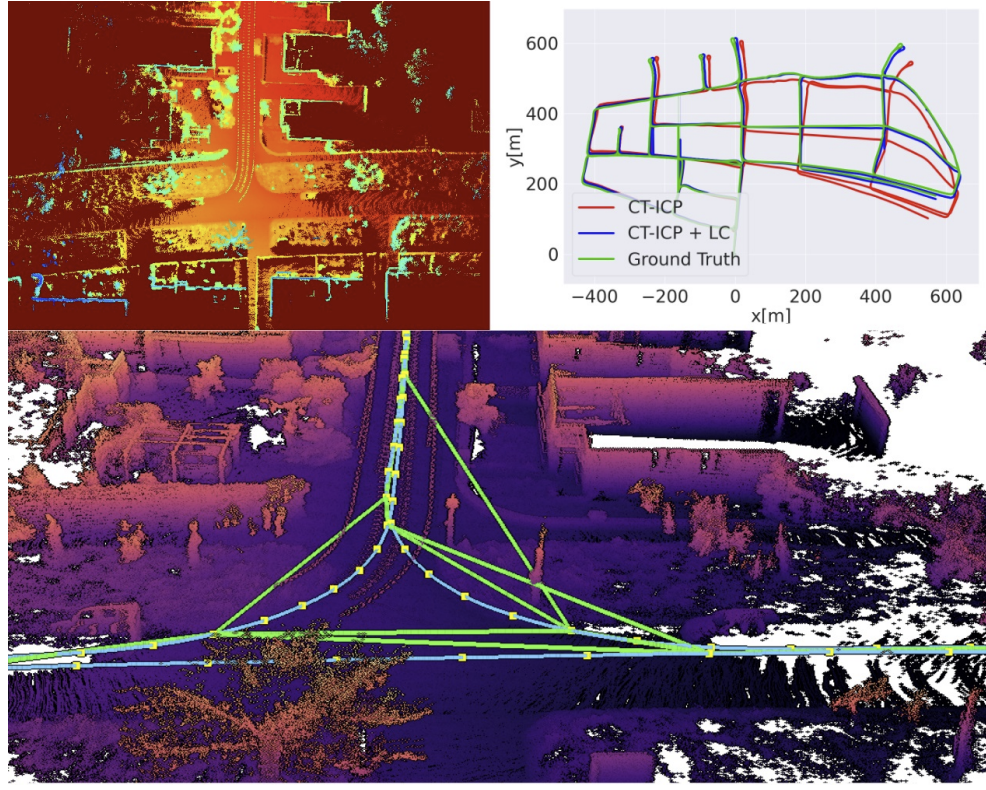

- LiDAR + Point Cloud Analysis: We started by helping select the right LiDAR and optimize its positioning on the vehicle. Then, we processed dense 3D point clouds and IMU data to map the terrain and calculate surface normals, identifying slope angles in real time.

- ROS2 Pipeline and CAN Integration: We built on ROS2 to take advantage of state-of-the-art real-time technologies, and integrated with the CAN bus to ensure seamless communication with the tractor’s control unit.

- Rapid Iteration: Thanks to close collaboration with INRAE, we quickly refined the solution to match their exact needs and specifications.
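To make the surface-normal step a little more concrete, here is a minimal sketch of how a slope angle can be derived from a small patch of LiDAR points. It is not the project's actual implementation: the function names `fit_plane_normal` and `slope_angle_deg` are hypothetical, the plane is fitted with a simple least-squares height model (z = a·x + b·y + c), and the `up` vector is assumed to come from the IMU's gravity estimate.

```python
import math
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


def fit_plane_normal(points: Sequence[Vec3]) -> Vec3:
    """Least-squares fit of z = a*x + b*y + c; returns the unit normal with positive z.

    Hypothetical helper: assumes the patch is not vertical in its own frame.
    """
    n = len(points)
    if n < 3:
        raise ValueError("need at least 3 points to fit a plane")

    # Centering the points removes the offset c from the problem.
    cx = sum(p[0] for p in points) / n
    cy = sum(p[1] for p in points) / n
    cz = sum(p[2] for p in points) / n

    sxx = sxy = syy = sxz = syz = 0.0
    for x, y, z in points:
        dx, dy, dz = x - cx, y - cy, z - cz
        sxx += dx * dx
        sxy += dx * dy
        syy += dy * dy
        sxz += dx * dz
        syz += dy * dz

    # Solve the 2x2 normal equations for the gradients a and b.
    det = sxx * syy - sxy * sxy
    if abs(det) < 1e-12:
        raise ValueError("points are collinear in x-y; plane is not determined")
    a = (sxz * syy - syz * sxy) / det
    b = (syz * sxx - sxz * sxy) / det

    norm = math.sqrt(a * a + b * b + 1.0)
    return (-a / norm, -b / norm, 1.0 / norm)


def slope_angle_deg(normal: Vec3, up: Vec3 = (0.0, 0.0, 1.0)) -> float:
    """Angle in degrees between a unit surface normal and the vertical direction.

    `up` is assumed to be the gravity-aligned vertical (e.g. from the IMU).
    """
    up_norm = math.sqrt(sum(u * u for u in up))
    cos_theta = abs(sum(c * u for c, u in zip(normal, up))) / up_norm
    return math.degrees(math.acos(min(1.0, cos_theta)))


# Synthetic patch rising 0.5 m per metre along x.
patch = [(x * 0.5, y * 0.5, 0.25 * x) for x in range(-2, 3) for y in range(-2, 3)]
print(round(slope_angle_deg(fit_plane_normal(patch)), 1))  # 26.6
```

In a real pipeline this would typically run per neighborhood of the point cloud rather than on one patch, and a library-based normal estimator could replace the hand-written fit.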

See it in action in the video below: the tractor’s projected trajectories, the LiDAR point clouds transformed back into the environment’s frame, and the slope normals colored by potential rollover risk, all in real time!

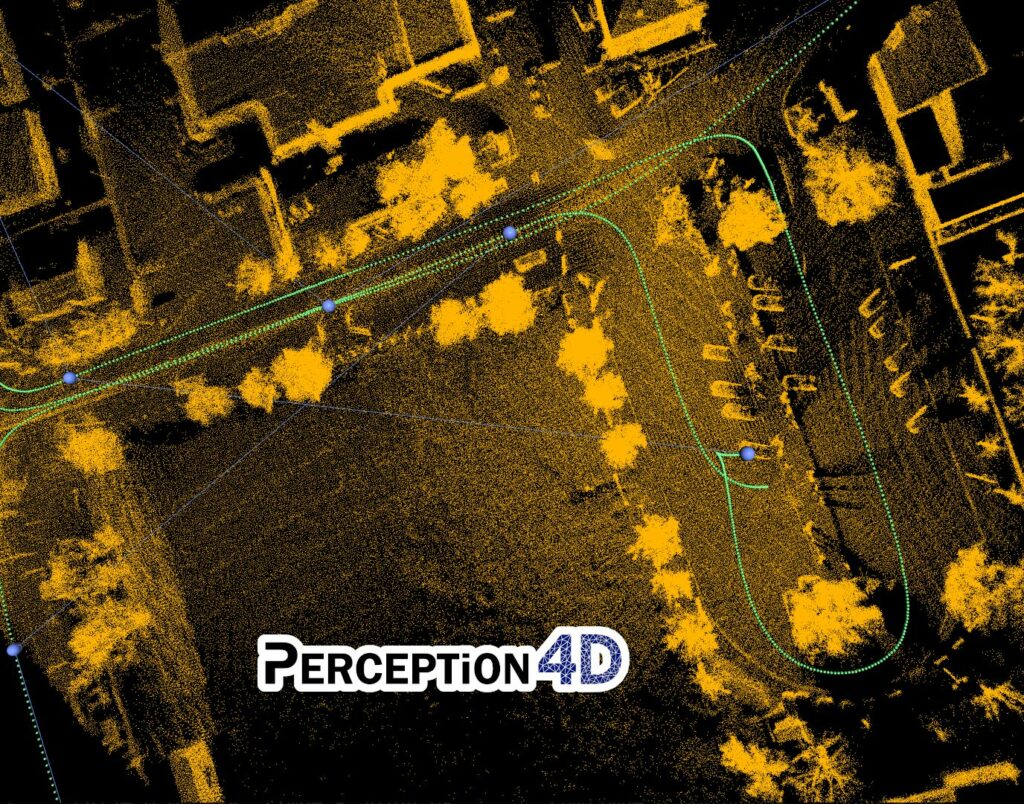

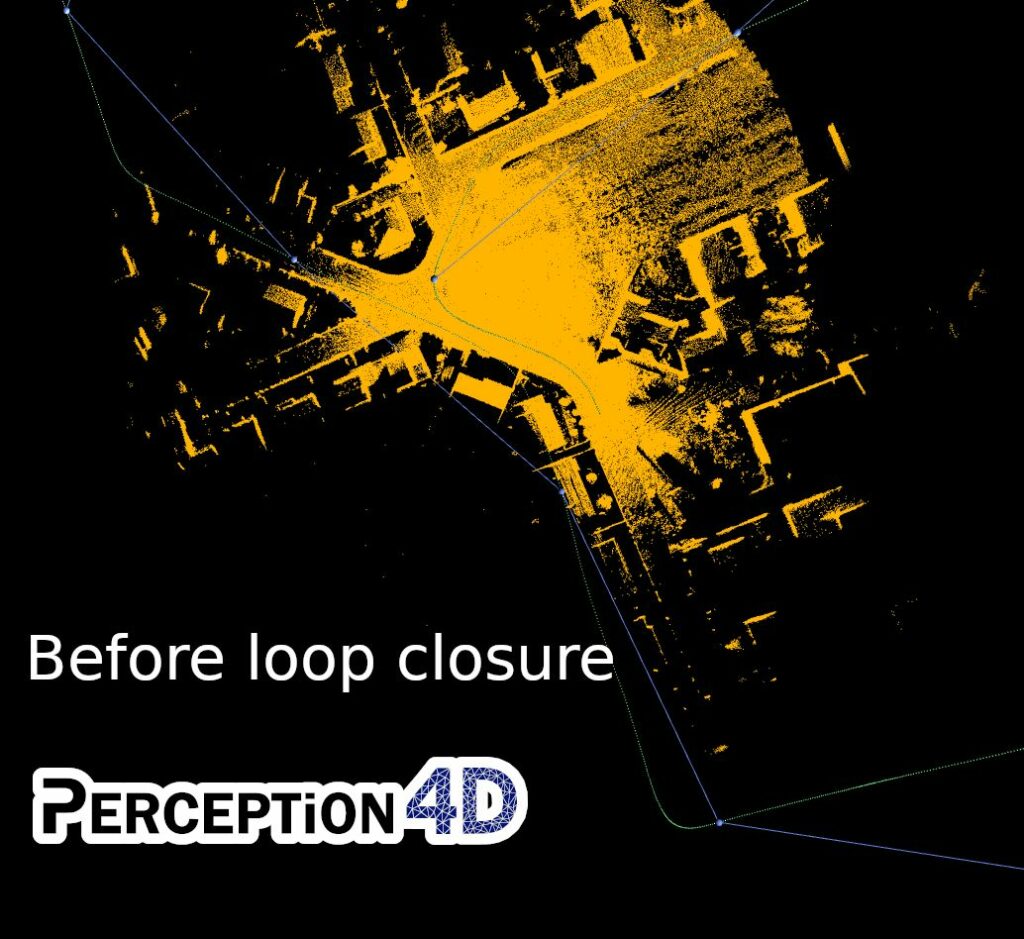
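The risk coloring of the normals can be pictured as a simple mapping from slope angle to color. The sketch below is purely illustrative: the function `risk_color` and the 15° and 25° thresholds are hypothetical placeholders, not values from the project, and real limits would depend on the vehicle, its load, and its speed.

```python
from typing import Tuple


def risk_color(
    slope_deg: float,
    warn_deg: float = 15.0,      # hypothetical: below this, the slope is shown as safe
    critical_deg: float = 25.0,  # hypothetical: at or above this, full risk
) -> Tuple[int, int, int]:
    """Map a slope angle to an RGB color, blending linearly from green to red."""
    if critical_deg <= warn_deg:
        raise ValueError("critical_deg must be greater than warn_deg")

    # 0.0 at the warning threshold, 1.0 at the critical threshold, clamped outside.
    t = (slope_deg - warn_deg) / (critical_deg - warn_deg)
    t = max(0.0, min(1.0, t))

    return (round(255 * t), round(255 * (1.0 - t)), 0)


for angle in (5.0, 20.0, 30.0):
    print(angle, risk_color(angle))
```

A continuous blend like this keeps borderline slopes visually distinct from clearly safe or clearly dangerous ones, which a hard two-color threshold would hide.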

This project showcases how Perception4D’s expertise in 3D and 4D perception (3D + time) can elevate your projects in autonomous vehicles, mobile robotics, or any industrial application using 3D sensors (LiDAR, RGB-D cameras, etc.).

Working on something similar? Let’s talk! We’d love to explore how we can collaborate to turn your 3D data into actionable solutions.

#LiDAR #ROS2 #AgriculturalRobotics #CANBus #3DPerception #SafetyInnovation #INRAE #PointCloud #AutonomousVehicles #3D #mobileRobot #RobotPerception